-  +

+

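 👆 Click to watch the MaiMai demo video 👆
@@ -37,12 +115,6 @@
 > - Because the project iterates continuously, there may be known or unknown bugs
 > - Because it is still under development, it may consume a large number of tokens

-## 💬 Discussion groups
-- [Group 1](https://qm.qq.com/q/VQ3XZrWgMs) 766798517; joining one of the groups below is recommended (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
-- [Group 2](https://qm.qq.com/q/RzmCiRtHEW) 571780722 (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
-- [Group 3](https://qm.qq.com/q/wlH5eT8OmQ) 1035228475 (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
-- [Group 4](https://qm.qq.com/q/wlH5eT8OmQ) 729957033 (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
-
 **📚 Community-written wiki:** https://maimbot.pages.dev/
 **📚 Bilibili tutorial by SLAPQ:** https://www.bilibili.com/opus/1041609335464001545
@@ -51,9 +123,17 @@
 - (contributed by [CabLate](https://github.com/cablate)) [Version for Telegram and other platforms (possibly more in the future)](https://github.com/cablate/MaiMBot/tree/telegram)
 - [Central discussion thread](https://github.com/SengokuCola/MaiMBot/discussions/149)
-## 📝 Attention! Attention! Attention!
-**If you have an idea and want to submit a PR**
-- Because this project is iterating quickly, its features are still being adjusted, and a refactor is planned, no PR will be merged unless it has been discussed with the core development team. Thank you! If you still wish to submit a PR, see the pinned issue for details
+## ✍️ How to report bugs, submit suggestions, or contribute to this project
+
+MaiMBot is an open-source project and we very much welcome your participation. Your contributions, whether bug reports, feature requests, or code PRs, are all valuable to the project, and we are grateful for your support! 🎉 Unstructured discussion, however, lowers communication efficiency and slows down problem solving, so before submitting any contribution please read the project's [contribution guide](CONTRIBUTE.md)
+
+### 💬 Discussion groups
+- [Group 5](https://qm.qq.com/q/JxvHZnxyec) 1022489779 (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
+- [Group 1](https://qm.qq.com/q/VQ3XZrWgMs) 766798517 [full] (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
+- [Group 2](https://qm.qq.com/q/RzmCiRtHEW) 571780722 [full] (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
+- [Group 3](https://qm.qq.com/q/wlH5eT8OmQ) 1035228475 [full] (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
+- [Group 4](https://qm.qq.com/q/wlH5eT8OmQ) 729957033 [full] (development and suggestion discussion). Replies are not guaranteed; documentation and code take priority
+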

+

+

+

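+
+**Thanks also to every user who has offered valuable feedback and suggestions for MaiMai's development, and to everyone who has accompanied MaiMai this far**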

+

## Stargazers over time

-[](https://starchart.cc/SengokuCola/MaiMBot)

+[](https://starchart.cc/MaiM-with-u/MaiBot)

diff --git a/bot.py b/bot.py

index bf853bc0..30714e84 100644

--- a/bot.py

+++ b/bot.py

@@ -1,4 +1,5 @@

import asyncio

+import hashlib

import os

import shutil

import sys

@@ -13,8 +14,6 @@ from nonebot.adapters.onebot.v11 import Adapter

import platform

from src.common.logger import get_module_logger

-

-# Configure the main program's log format
 logger = get_module_logger("main_bot")
 # Capture the environment variables as they are before any env file is loaded

@@ -102,7 +101,6 @@ def load_env():

RuntimeError(f"ENVIRONMENT 配置错误,请检查 .env 文件中的 ENVIRONMENT 变量及对应 .env.{env} 是否存在")

-

def scan_provider(env_config: dict):

provider = {}

@@ -165,25 +163,84 @@ async def uvicorn_main():

uvicorn_server = server

await server.serve()

+

 def check_eula():
-    eula_file = Path("elua.confirmed")
-
-    # Return immediately if the EULA has already been confirmed
+    eula_confirm_file = Path("eula.confirmed")
+    privacy_confirm_file = Path("privacy.confirmed")
+    eula_file = Path("EULA.md")
+    privacy_file = Path("PRIVACY.md")
+
+    eula_updated = True
+    eula_new_hash = None
+    privacy_updated = True
+    privacy_new_hash = None
+
+    eula_confirmed = False
+    privacy_confirmed = False
+
+    # First compute the hash of the current EULA file
     if eula_file.exists():
+        with open(eula_file, "r", encoding="utf-8") as f:
+            eula_content = f.read()
+        eula_new_hash = hashlib.md5(eula_content.encode("utf-8")).hexdigest()
+    else:
+        logger.error("EULA.md 文件不存在")
+        raise FileNotFoundError("EULA.md 文件不存在")
+
+    # Then compute the hash of the current privacy policy file
+    if privacy_file.exists():
+        with open(privacy_file, "r", encoding="utf-8") as f:
+            privacy_content = f.read()
+        privacy_new_hash = hashlib.md5(privacy_content.encode("utf-8")).hexdigest()
+    else:
+        logger.error("PRIVACY.md 文件不存在")
+        raise FileNotFoundError("PRIVACY.md 文件不存在")
+
+    # Check whether the EULA confirmation file exists and matches the current hash
+    if eula_confirm_file.exists():
+        with open(eula_confirm_file, "r", encoding="utf-8") as f:
+            confirmed_content = f.read()
+        if eula_new_hash == confirmed_content:
+            eula_confirmed = True
+            eula_updated = False
+    if eula_new_hash == os.getenv("EULA_AGREE"):
+        eula_confirmed = True
+        eula_updated = False
+
+    # Check whether the privacy policy confirmation file exists and matches the current hash
+    if privacy_confirm_file.exists():
+        with open(privacy_confirm_file, "r", encoding="utf-8") as f:
+            confirmed_content = f.read()
+        if privacy_new_hash == confirmed_content:
+            privacy_confirmed = True
+            privacy_updated = False
+    if privacy_new_hash == os.getenv("PRIVACY_AGREE"):
+        privacy_confirmed = True
+        privacy_updated = False
+
+    # If the EULA or the privacy policy has changed, ask the user to confirm again
+    if eula_updated or privacy_updated:
+        print("EULA或隐私条款内容已更新,请在阅读后重新确认,继续运行视为同意更新后的以上两款协议")
+        print(
+            f'输入"同意"或"confirmed"或设置环境变量"EULA_AGREE={eula_new_hash}"和"PRIVACY_AGREE={privacy_new_hash}"继续运行'
+        )
+        while True:
+            user_input = input().strip().lower()
+            if user_input in ["同意", "confirmed"]:
+                # print("确认成功,继续运行")
+                # print(f"确认成功,继续运行{eula_updated} {privacy_updated}")
+                if eula_updated:
+                    print(f"更新EULA确认文件{eula_new_hash}")
+                    eula_confirm_file.write_text(eula_new_hash, encoding="utf-8")
+                if privacy_updated:
+                    print(f"更新隐私条款确认文件{privacy_new_hash}")
+                    privacy_confirm_file.write_text(privacy_new_hash, encoding="utf-8")
+                break
+            else:
+                print('请输入"同意"或"confirmed"以继续运行')
+        return
+    elif eula_confirmed and privacy_confirmed:
         return
-
-    print("使用MaiMBot前请先阅读ELUA协议,继续运行视为同意协议")
-    print("协议内容:https://github.com/SengokuCola/MaiMBot/blob/main/EULA.md")
-    print('输入"同意"或"confirmed"继续运行')
-
-    while True:
-        user_input = input().strip().lower()  # lower-case the input so the check ignores case
-        if user_input in ['同意', 'confirmed']:
-            # Create the confirmation file
-            eula_file.touch()
-            break
-        else:
-            print('请输入"同意"或"confirmed"以继续运行')

def raw_main():

@@ -191,14 +248,14 @@ def raw_main():

 # Only ensures consistent behavior; does not rely on localtime() and has almost no effect in production

if platform.system().lower() != "windows":

time.tzset()

-

+

check_eula()

-

+ print("检查EULA和隐私条款完成")

easter_egg()

init_config()

init_env()

load_env()

-

+

# load_logger()

env_config = {key: os.getenv(key) for key in os.environ}

@@ -230,7 +287,7 @@ if __name__ == "__main__":

app = nonebot.get_asgi()

loop = asyncio.new_event_loop()

asyncio.set_event_loop(loop)

-

+

try:

loop.run_until_complete(uvicorn_main())

except KeyboardInterrupt:

@@ -238,7 +295,7 @@ if __name__ == "__main__":

loop.run_until_complete(graceful_shutdown())

finally:

loop.close()

-

+

except Exception as e:

logger.error(f"主程序异常: {str(e)}")

if loop and not loop.is_closed():
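Reviewer note: the new `check_eula()` treats an agreement as accepted only while the stored hash (or the `EULA_AGREE` / `PRIVACY_AGREE` environment variable) equals the MD5 of the current document. The idea can be sketched in isolation as below. This is a minimal illustration, not the project's code: the helper names `agreement_hash`, `is_confirmed`, and `record_confirmation` are hypothetical and do not exist in `bot.py`.

```python
import hashlib
import os
from pathlib import Path


def agreement_hash(text: str) -> str:
    """Return the MD5 hex digest of the agreement text (UTF-8 encoded)."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def is_confirmed(doc: Path, confirm_file: Path, env_var: str) -> bool:
    """True if the stored hash or the environment variable matches the current document."""
    current = agreement_hash(doc.read_text(encoding="utf-8"))
    if confirm_file.exists() and confirm_file.read_text(encoding="utf-8") == current:
        return True
    # Fall back to the environment variable (useful for non-interactive deployments)
    return os.getenv(env_var) == current


def record_confirmation(doc: Path, confirm_file: Path) -> None:
    """Store the current document hash so later runs skip the prompt."""
    current = agreement_hash(doc.read_text(encoding="utf-8"))
    confirm_file.write_text(current, encoding="utf-8")
```

Because the confirmation is tied to the document hash, any edit to the agreement text invalidates earlier confirmations, which appears to be the intent of this change.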

diff --git a/changelog.md b/changelog.md

index 73803d71..6c6b2128 100644

--- a/changelog.md

+++ b/changelog.md

@@ -1,6 +1,182 @@

# Changelog

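 AI-generated summary
+## [0.6.0] - 2025-3-25
+### 🌟 Core feature enhancements
+#### Thought-flow system (experimental)
+- Added thought flow as an experimental feature
+- Thought-flow "big core + small core" architecture
+- Thought-flow reply-willingness mode
+
+#### Memory system improvements
+- Improved the memory extraction strategy
+- Improved the memory prompt structure
+
+#### Relationship system improvements
+- Fixed the `relationship_value` type error
+- Improved the relationship management system
+- Improved how relationship values are calculated
+
+### 💻 System architecture improvements
+#### Configuration system
+- Tidied up the configuration file layout
+- Added the splitter feature
+- Added a customizable emoji penalty coefficient
+- Fixed configuration file saving issues
+- Improved configuration item management
+- New configuration items:
+ - `schedule`: schedule generation settings
+ - `response_spliter`: reply splitting control
+ - `experimental`: experimental feature switches
+ - `llm_outer_world` and `llm_sub_heartflow`: thought-flow model settings
+ - `llm_heartflow`: thought-flow core model settings
+ - `prompt_schedule_gen`: schedule generation prompt settings
+ - `memory_ban_words`: memory filter-word settings
+- Configuration structure changes:
+ - Reorganized the model configuration
+ - Adjusted default values
+ - Reordered configuration items
+- Removed redundant configuration
+
+#### WebUI
+- Added reply-willingness mode selection
+- Improved the WebUI interface
+- Improved the WebUI configuration saving mechanism
+
+#### Deployment support
+- Improved the Docker build process
+- Improved Windows script support
+- Improved the Linux one-click install script
+- Added a macOS tutorial
+
+### 🐛 Bug fixes
+#### Stability
+- Fixed emoji reviewer issues
+- Fixed heartbeat sending issues
+- Fixed an exception when handling poke messages
+- Fixed schedule errors
+- Fixed file read/write encoding issues
+- Fixed splitting of Western-script characters
+- Fixed recognition of custom API providers
+- Fixed saving of personality settings
+- Fixed EULA and privacy policy encoding issues
+- Fixed a cfg variable reference issue
+
+#### Performance
+- Made topic extraction more efficient
+- Improved the logger output format
+- Improved the cmd cleanup feature
+- Improved LLM usage statistics
+- Made memory processing more efficient
+
+### 📚 Documentation
+- Updated README.md
+- Added a macOS deployment tutorial
+- Improved the documentation structure
+- Updated the EULA and privacy policy
+- Improved the deployment documentation
+
+### 🔧 Other improvements
+- Added a mystery quiz feature
+- Added a personality assessment model
+- Improved emoji review
+- Improved message forwarding
+- Cleaned up code style and formatting
+- Improved exception handling
+- Improved the log output format
+
+### Main directions of improvement
+1. Complete the thought-flow system
+2. Make the memory system more efficient
+3. Make the relationship system more stable
+4. Make the configuration system easier to use
+5. Strengthen the WebUI
+6. Complete the deployment documentation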

+

+

+

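+## [0.5.15] - 2025-3-17
+### 🌟 Core feature enhancements
+#### Relationship system upgrade
+- Added building and enabling of the relationship system
+- Improved the relationship management system
+- Improved the prompt builder structure
+- Added a script for manually editing the memory store
+- Added alter support
+
+#### Launcher improvements
+- Added MaiLauncher.bat version 1.0
+- Improved Python and Git environment detection
+- Added a virtual environment check
+- Improved the toolbox menu options
+- Added a branch reset feature
+- Added MongoDB support
+- Improved the script logic
+- Fixed the crash on the virtual environment option and the conda activation issue
+- Fixed the crash in the environment detection menu
+- Fixed the wrong copy path for the .env.prod file
+
+#### Logging system improvements
+- Added a GUI log viewer
+- Refactored the log factory handling
+- Improved log level configuration
+- Log level can now be set through environment variables
+- Improved console log output
+- Improved the logger output format
+
+### 💻 System architecture improvements
+#### Configuration system upgrade
+- Updated the configuration file to version 0.0.10
+- Improved visual editing of the configuration file
+- Added configuration file version detection
+- Improved the configuration file saving mechanism
+- Fixed a bug where repeated saves could empty list contents
+- Fixed saving of personality settings and other items
+
+#### WebUI
+- Improved the WebUI interface and features
+- Added post-installation management
+- Fixed some incorrect wording
+
+#### Deployment support
+- Improved the Docker build process
+- Improved the MongoDB service startup logic
+- Improved Windows script support
+- Improved the Linux one-click install script
+- Added a run script specifically for Debian 12
+
+### 🐛 Bug fixes
+#### Stability
+- Fixed the bot failing to recognize at targets and reply targets
+- Fixed an extra 0.5 being added on every read from the database
+- Fixed new versions failing to start because of the version check
+- Fixed the confirmation logic for configuration updates and knowledge base learning
+- Improved token statistics
+- Fixed encoding compatibility when processing the EULA and privacy policy
+- Fixed file read/write encoding issues by using UTF-8 everywhere
+- Fixed kaomoji splitting
+- Fixed a cfg variable reference issue in the willing module
+
+### 📚 Documentation
+- Rewrote CLAUDE.md as a high-information-density project document
+- Added mermaid system architecture and module dependency diagrams
+- Added a core file index and a table of class responsibilities
+- Added a message processing flowchart
+- Improved the documentation structure
+- Updated the EULA and privacy policy documents
+
+### 🔧 Other improvements
+- Updated the global online count display
+- Improved the statistics output
+- Added a script for manually editing memory (supports adding, deleting, and querying nodes and edges)
+
+### Main directions of improvement
+1. Complete the relationship system
+2. Improve the launcher and the deployment process
+3. Improve the logging system
+4. Make the configuration system more stable
+5. Make the documentation more complete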

+

## [0.5.14] - 2025-3-14

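 ### 🌟 Core feature enhancements
 #### Memory system improvements
@@ -48,8 +224,6 @@ AI-generated summary
 4. Improve logging and error handling
 5. Make the deployment documentation more complete
-
-
 ## [0.5.13] - 2025-3-12
 ### 🌟 Core feature enhancements
 #### Memory system upgrade
@@ -133,3 +307,4 @@ AI-generated summary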

+

diff --git a/changelog_config.md b/changelog_config.md

index c4c56064..92a522a2 100644

--- a/changelog_config.md

+++ b/changelog_config.md

@@ -1,12 +1,32 @@

# Changelog

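+## [0.0.11] - 2025-3-12
+### Added
+- Added the `schedule` configuration item for configuring schedule generation
+- Added the `response_spliter` configuration item for controlling reply splitting
+- Added the `experimental` configuration item for experimental feature switches
+- Added the `llm_outer_world` and `llm_sub_heartflow` model settings
+- Added the `llm_heartflow` model setting
+- Added the `prompt_schedule_gen` parameter to the `personality` configuration item
+
+### Changed
+- Reorganized the model configuration
+- Adjusted the default values of some configuration items
+- Reordered configuration items, moving `groups` closer to the top
+- In the `message` configuration item:
+ - Added the `max_response_length` parameter
+- Added the `emoji_response_penalty` parameter to the `willing` configuration item
+- Renamed `prompt_schedule` in the `personality` configuration item to `prompt_schedule_gen`
+
+### Removed
+- Removed the `min_text_length` configuration item
+- Removed the `cq_code` configuration item
+- Removed the `others` configuration item (its functionality is now part of `experimental`)
+
 ## [0.0.5] - 2025-3-11
 ### Added
 - Added the `alias_names` configuration item for specifying MaiMai's aliases.
 ## [0.0.4] - 2025-3-9
 ### Added
-- Added the `memory_ban_words` configuration item for specifying words that should not be remembered.
-
-
-
+- Added the `memory_ban_words` configuration item for specifying words that should not be remembered.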

\ No newline at end of file

diff --git a/config/auto_update.py b/config/auto_update.py

index d87b7c12..a0d87852 100644

--- a/config/auto_update.py

+++ b/config/auto_update.py

@@ -3,34 +3,35 @@ import shutil

import tomlkit

from pathlib import Path

+

 def update_config():
     # Get the project root directory
     root_dir = Path(__file__).parent.parent
     template_dir = root_dir / "template"
     config_dir = root_dir / "config"
-
+
     # Define the file paths
     template_path = template_dir / "bot_config_template.toml"
     old_config_path = config_dir / "bot_config.toml"
     new_config_path = config_dir / "bot_config.toml"
-
+
     # Read the old configuration file
     old_config = {}
     if old_config_path.exists():
         with open(old_config_path, "r", encoding="utf-8") as f:
             old_config = tomlkit.load(f)
-
+
     # Delete the old configuration file
     if old_config_path.exists():
         os.remove(old_config_path)
-
+
     # Copy the template file into the config directory
     shutil.copy2(template_path, new_config_path)
-
+
     # Read the new configuration file
     with open(new_config_path, "r", encoding="utf-8") as f:
         new_config = tomlkit.load(f)
-
+
     # Recursively update the configuration
     def update_dict(target, source):
         for key, value in source.items():

@@ -55,13 +56,14 @@ def update_config():

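                     except (TypeError, ValueError):
                         # If the conversion fails, assign the value directly
                         target[key] = value
-
+
     # Carry the old configuration's values over into the new configuration
     update_dict(new_config, old_config)
-
+
     # Save the updated configuration (preserving comments and formatting)
     with open(new_config_path, "w", encoding="utf-8") as f:
         f.write(tomlkit.dumps(new_config))

+
 if __name__ == "__main__":
     update_config()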
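Reviewer note: the script replaces `bot_config.toml` with a fresh copy of the template and then copies the user's old values back in recursively. The body of `update_dict` is mostly elided in this hunk, so the sketch below is only an approximation of that merge using plain dictionaries instead of `tomlkit` documents. It assumes that keys missing from the template are dropped; the function name `merge_known_keys` is hypothetical.

```python
from typing import Any, Dict


def merge_known_keys(target: Dict[str, Any], source: Dict[str, Any]) -> None:
    """Copy values from source into target, but only for keys target already has."""
    for key, value in source.items():
        if key not in target:
            # Assumption: a key that no longer exists in the template is ignored
            continue
        if isinstance(value, dict) and isinstance(target[key], dict):
            # Descend into nested tables so sibling defaults are preserved
            merge_known_keys(target[key], value)
        else:
            target[key] = value


template = {"bot": {"qq": 0, "nickname": "MaiMai"}, "schedule": {"enable": True}}
old = {"bot": {"qq": 12345}, "others": {"removed_option": 1}}
merge_known_keys(template, old)
```

After the call, `template` keeps the new `schedule` table and the default `nickname`, takes `qq` from the old file, and never gains the retired `others` table.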

diff --git a/docs/docker_deploy.md b/docs/docker_deploy.md

index f78f73dc..38eb5444 100644

--- a/docs/docker_deploy.md

+++ b/docs/docker_deploy.md

@@ -41,7 +41,7 @@ NAPCAT_UID=$(id -u) NAPCAT_GID=$(id -g) docker-compose up -d

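 ### 3. Edit the configuration and restart Docker
-- Go to the [🎀 Beginner configuration guide](docs/installation_cute.md) or the [⚙️ Standard configuration guide](docs/installation_standard.md) to finish writing the `.env.prod` and `bot_config.toml` configuration files\
+- Go to the [🎀 Beginner configuration guide](./installation_cute.md) or the [⚙️ Standard configuration guide](./installation_standard.md) to finish writing the `.env.prod` and `bot_config.toml` configuration files\
 **Pay attention to the IP in the HOST field of `.env.prod`: a Docker deployment and a direct install on the host are configured differently**
 - Restart the Docker containers: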

diff --git a/docs/fast_q_a.md b/docs/fast_q_a.md

index 3b995e24..abec69b4 100644

--- a/docs/fast_q_a.md

+++ b/docs/fast_q_a.md

@@ -1,113 +1,62 @@

 ## Quick-update Q&A ❓

-

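-
 - This file records some common questions from newcomers.
-
-
 ### Full installation tutorial
-
-
 [MaiMbot easy configuration tutorial](https://www.bilibili.com/video/BV1zsQ5YCEE6)
-
-
 ### API-related questions
-
-
-
-
 - Why does it say "缺失必要的API KEY" (a required API KEY is missing)? ❓
-
-
+
-
-
-
-
----
-
-
-
-
----
-
-
-
-
->
->
->You need to register an account on the [Silicon Flow Api](https://cloud.siliconflow.cn/account/ak)
->website, then click this link to open the page for obtaining an API KEY.
+>You need to register an account on the [Silicon Flow Api](https://cloud.siliconflow.cn/account/ak) website, then click this link to open the page for obtaining an API KEY.
 >
 >Click the "新建API密钥" (New API key) button to create an API KEY for MaiMBot. Don't forget to click copy.
 >
 >Then open MaiMBot's root directory on your computer and use Notepad or another text editor to open the [.env.prod](../.env.prod)
->file. Paste the API KEY you just copied to the right of the equals sign in "SILICONFLOW_KEY=".
+>file. Paste the API KEY you just copied to the right of the equals sign in `SILICONFLOW_KEY=`.
 >
 >By default, all of the APIs MaiMBot uses are SiliconFlow's.
->
->
-
-
-
-
+---
 - I want to use an API provider other than SiliconFlow; what should I do? ❓
----
-
-
-
->
->
 >Use Notepad or another text editor to open [bot_config.toml](../config/bot_config.toml) in the config directory
->and change the "provider = " field in it. Also remember to follow the pattern of the [.env.prod](../.env.prod)
->file when adding the Api Key and Base URL.
 >
->For example, if you wrote " provider = \"ABC\" ", you need to add to the [.env.prod](../.env.prod)
->file entries of the form " ABC_BASE_URL = https://api.abc.com/v1 " and " ABC_KEY = sk-1145141919810 ".
+>and change the `provider = ` field in it. Also remember to follow the pattern of the [.env.prod](../.env.prod) file when adding the Api Key and Base URL.
 >
->**If you do not have a solid understanding of AI, changing the provider field of the image-recognition and embedding models may cause bugs, because you would be calling a model that does not exist on that API provider**
+>For example, if you wrote `provider = "ABC"`, you need to add to the [.env.prod](../.env.prod) file entries of the form `ABC_BASE_URL = https://api.abc.com/v1` and `ABC_KEY = sk-1145141919810`.
 >
->In that case, change the field back to "provider = \"SILICONFLOW\" " to fix the bug.
+>**If you do not have a solid understanding of AI models, changing the provider field of the image-recognition and embedding models may cause bugs, because you would be calling a model that does not exist on that API provider**
 >
->
-
-
-
+>In that case, change the field back to `provider = "SILICONFLOW"` to fix the problem.
 ### MongoDB-related questions
-
-
 - How do I clear the emoji stored by the bot? ❓
+>First install `MongoDB Compass` ([download link](https://www.mongodb.com/try/download/compass)); it supports `macOS, Windows, Ubuntu, Redhat`
+>Taking Windows as an example, keep the options as shown in the figure and click `Download`; on other systems, choose your own under `Platform`:
+>
----
-
-
----
-
-
-
->
->
 >Open MongoDB Compass; in the top-left corner you will see a screen like this:
 >
->
->
->
+>
+>
 >
 >
 >
 >
 >
 >After clicking "CONNECT", expand the MegBot tab
 >
->
->
->
+>
+>
 >
 >
 >
 >
 >
 >Open "emoji", then click "DELETE" to remove all entries, as shown
 >
->
->
->
+>
+>
 >
 >
 >
 >
 >
@@ -116,34 +65,225 @@
 >All of MaiMBot's images are stored in the [data](../data) folder, split by type into [emoji](../data/emoji) and [image](../data/image)
 >
 >When deleting server data, don't forget to clear these images as well.
->
->
-
-
-
-- Why can't I connect to the MongoDB server? ❓
 ---
+- Why can't I connect to the MongoDB server? ❓
->
->
 >This problem is fairly complex, but you can go through the steps below to find out what exactly is wrong
->
->
->
+
+
+>#### Windows
 > 1. Check whether the directory containing mongod.exe has been added to path. For details, see
 >
->
->
 > [CSDN: detailed steps for setting the Path environment variable on Windows 10](https://blog.csdn.net/flame_007/article/details/106401215)
 >
->
->
 > **What goes into path is the full directory that contains the exe, not the exe itself!**
 >
 >
 >
-> 2. To be completed
+> 2. After adding the environment variable, press `WIN+R`, type `powershell` in the small box that pops up, and press Enter. In PowerShell, run `mongod --version`; if it prints anything, the environment variable was added successfully.
+> Next, run `mongod --port 27017` directly (`--port` sets the port, which makes it easy to connect from the GUI). If the connection fails, you will most likely see
+>```shell
+>"error":"NonExistentPath: Data directory \\data\\db not found. Create the missing directory or specify another path using (1) the --dbpath command line option, or (2) by adding the 'storage.dbPath' option in the configuration file."
+>```
+>This happens because there is no `data\db` folder on your C: drive, so mongo does not know where to store the database files. Creating it on C: is not recommended, since it puts a heavy load on that drive. Instead run `mongod --dbpath=PATH --port 27017`, replacing `PATH` with a folder of your choice, but do not put it under mongodb's bin folder! For example, create a mongodata folder on the D: drive and write the command like this
+>```shell
+>mongod --dbpath=D:\mongodata --port 27017
+>```
 >
->
\ No newline at end of file
+>If that still does not work, port 27017 may already be in use
+>Run the command
+>```shell
+> netstat -ano | findstr :27017
+>```
+>to check whether the port is currently in use. If there is output, it generally looks like this
+>```shell
+> TCP 127.0.0.1:27017 0.0.0.0:0 LISTENING 5764
+> TCP 127.0.0.1:27017 127.0.0.1:63387 ESTABLISHED 5764
+> TCP 127.0.0.1:27017 127.0.0.1:63388 ESTABLISHED 5764
+> TCP 127.0.0.1:27017 127.0.0.1:63389 ESTABLISHED 5764
+>```
+>The last number is the PID. Use the following command to see which process is using the port
+>```shell
+>tasklist /FI "PID eq 5764"
+>```
+>If it is an unimportant process, you can stop it with the `taskkill` command, for example `Taskkill /F /PID 5764`
+>
+>If you are really not comfortable with the command line, press `Ctrl+Shift+Esc` to open Task Manager and type the PID into the search box to find the process.
+>
+>If you are worried about killing an important process, change `MONGODB_PORT` in `.env.dev` to another value, and change the `--port` argument to the same value when starting
+>```ini
+>MONGODB_HOST=127.0.0.1
+>MONGODB_PORT=27017 # change this
+>DATABASE_NAME=MegBot
+>```
+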
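Reviewer note: the port check that the added Windows section performs with `netstat` can also be done from Python, which works the same way on every platform. The sketch below is illustrative only and is not part of the patch; the helper name `port_in_use` is hypothetical, and the host and port are the defaults quoted in the Q&A above.

```python
import socket


def port_in_use(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        # connect_ex returns 0 on success instead of raising an exception
        return sock.connect_ex((host, port)) == 0


if __name__ == "__main__":
    if port_in_use("127.0.0.1", 27017):
        print("Port 27017 is in use (possibly by mongod itself)")
    else:
        print("Nothing is listening on port 27017")
```

A successful connection only shows that some process is listening; it does not identify the process, so the `tasklist` step is still needed to see which one it is.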

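+```xml
+<?xml version="1.0" encoding="UTF-8"?>
+<!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">
+<plist version="1.0">
+<dict>
+    <key>Label</key>
+    <string>com.maimbot</string>
+
+    <key>ProgramArguments</key>
+    <array>
+        <string>/path/to/maimbot-venv/bin/python</string>
+        <string>/path/to/MaiMbot/bot.py</string>
+    </array>
+
+    <key>WorkingDirectory</key>
+    <string>/path/to/MaiMbot</string>
+
+    <key>StandardOutPath</key>
+    <string>/tmp/maimbot.log</string>
+    <key>StandardErrorPath</key>
+    <string>/tmp/maimbot.err</string>
+
+    <key>RunAtLoad</key>
+    <true/>
+    <key>KeepAlive</key>
+    <true/>
+</dict>
+</plist>
+```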

+

+Load the service:
+
+```bash
+launchctl load ~/Library/LaunchAgents/com.maimbot.plist
+launchctl start com.maimbot
+```
+
+View the logs:
+
+```bash
+tail -f /tmp/maimbot.log
+```
+
+---
+
+## Troubleshooting common problems
+
+1. **Permission problems**
+```bash
+# When you run into file permission errors
+chmod -R 755 ~/Documents/MaiMbot
+```
+
+2. **Missing Python modules**
+```bash
+# Make sure you are inside the virtual environment
+source maimbot-venv/bin/activate # or activate it with conda
+pip install --force-reinstall -r requirements.txt
+```
+
+3. **MongoDB connection failure**
+```bash
+# Check the service status
+brew services list
+# Set the feature compatibility version (note: this does not change database permissions)
+mongosh --eval "db.adminCommand({setFeatureCompatibilityVersion: '5.0'})"
+```
+
+---
+
+## System tuning suggestions
+
+1. **Disable App Nap**
+```bash
+# Keep the system from putting the NapCat process to sleep
+defaults write NSGlobalDomain NSAppSleepDisabled -bool YES
+```
+
+2. **Power management settings**
+```bash
+# Keep sleep from interrupting the bot
+sudo systemsetup -setcomputersleep Never
+```
+
+---

diff --git a/docs/API_KEY.png b/docs/pic/API_KEY.png

similarity index 100%

rename from docs/API_KEY.png

rename to docs/pic/API_KEY.png

diff --git a/docs/MONGO_DB_0.png b/docs/pic/MONGO_DB_0.png

similarity index 100%

rename from docs/MONGO_DB_0.png

rename to docs/pic/MONGO_DB_0.png

diff --git a/docs/MONGO_DB_1.png b/docs/pic/MONGO_DB_1.png

similarity index 100%

rename from docs/MONGO_DB_1.png

rename to docs/pic/MONGO_DB_1.png

diff --git a/docs/MONGO_DB_2.png b/docs/pic/MONGO_DB_2.png

similarity index 100%

rename from docs/MONGO_DB_2.png

rename to docs/pic/MONGO_DB_2.png

diff --git a/docs/pic/MongoDB_Ubuntu_guide.png b/docs/pic/MongoDB_Ubuntu_guide.png

new file mode 100644

index 00000000..abd47c28

Binary files /dev/null and b/docs/pic/MongoDB_Ubuntu_guide.png differ

diff --git a/docs/pic/QQ_Download_guide_Linux.png b/docs/pic/QQ_Download_guide_Linux.png

new file mode 100644

index 00000000..1d47e9d2

Binary files /dev/null and b/docs/pic/QQ_Download_guide_Linux.png differ

diff --git a/docs/pic/compass_downloadguide.png b/docs/pic/compass_downloadguide.png

new file mode 100644

index 00000000..06a08b52

Binary files /dev/null and b/docs/pic/compass_downloadguide.png differ

diff --git a/docs/pic/linux_beginner_downloadguide.png b/docs/pic/linux_beginner_downloadguide.png

new file mode 100644

index 00000000..4c6fbf01

Binary files /dev/null and b/docs/pic/linux_beginner_downloadguide.png differ

diff --git a/docs/synology_.env.prod.png b/docs/pic/synology_.env.prod.png

similarity index 100%

rename from docs/synology_.env.prod.png

rename to docs/pic/synology_.env.prod.png

diff --git a/docs/synology_create_project.png b/docs/pic/synology_create_project.png

similarity index 100%

rename from docs/synology_create_project.png

rename to docs/pic/synology_create_project.png

diff --git a/docs/synology_docker-compose.png b/docs/pic/synology_docker-compose.png

similarity index 100%

rename from docs/synology_docker-compose.png

rename to docs/pic/synology_docker-compose.png

diff --git a/docs/synology_how_to_download.png b/docs/pic/synology_how_to_download.png

similarity index 100%

rename from docs/synology_how_to_download.png

rename to docs/pic/synology_how_to_download.png

diff --git a/docs/video.png b/docs/pic/video.png

similarity index 100%

rename from docs/video.png

rename to docs/pic/video.png

diff --git a/docs/synology_deploy.md b/docs/synology_deploy.md

index a7b3bebd..1139101e 100644

--- a/docs/synology_deploy.md

+++ b/docs/synology_deploy.md

@@ -16,7 +16,7 @@

docker-compose.yml: https://github.com/SengokuCola/MaiMBot/blob/main/docker-compose.yml

下载后打开,将 `services-mongodb-image` 修改为 `mongo:4.4.24`。这是因为最新的 MongoDB 强制要求 AVX 指令集,而群晖似乎不支持这个指令集

-

+

bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_config_template.toml

下载后,重命名为 `bot_config.toml`

@@ -26,13 +26,13 @@ bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_c

下载后,重命名为 `.env.prod`

将 `HOST` 修改为 `0.0.0.0`,确保 maimbot 能被 napcat 访问

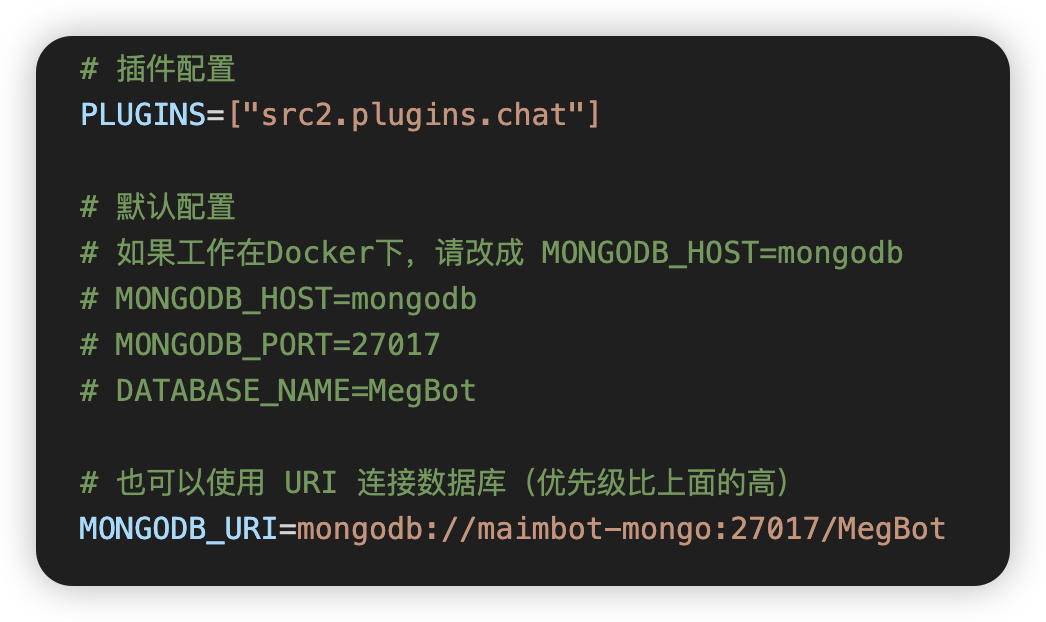

按下图修改 mongodb 设置,使用 `MONGODB_URI`

-

+

把 `bot_config.toml` 和 `.env.prod` 放入之前创建的 `MaiMBot`文件夹

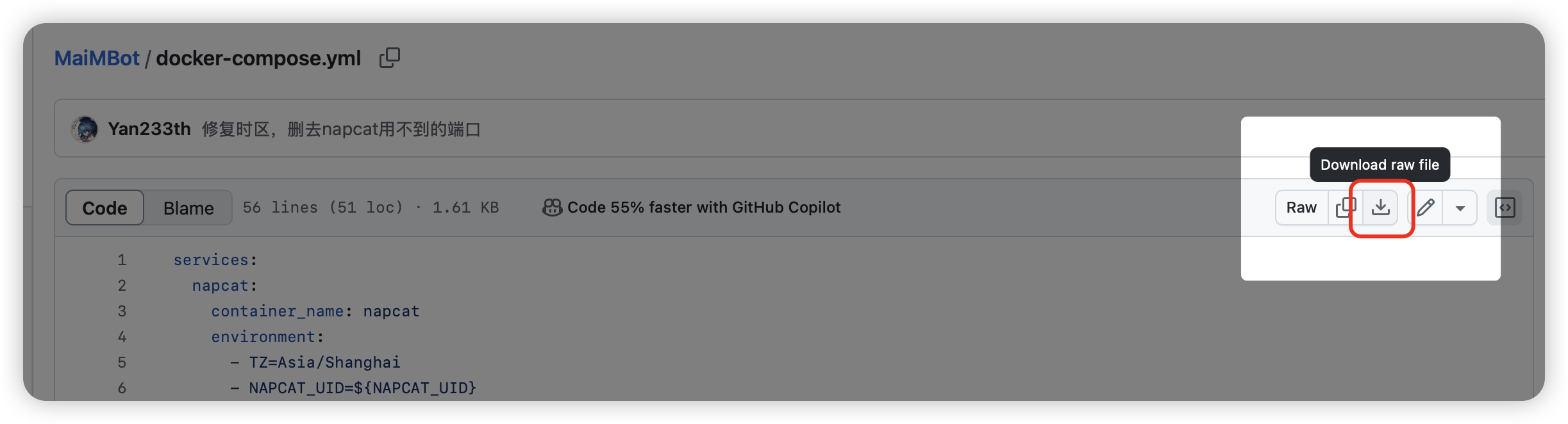

#### 如何下载?

-点这里!

+点这里!

### 创建项目

@@ -45,7 +45,7 @@ bot_config.toml: https://github.com/SengokuCola/MaiMBot/blob/main/template/bot_c

图例:

-

+

一路点下一步,等待项目创建完成

diff --git a/emoji_reviewer.py b/emoji_reviewer.py

new file mode 100644

index 00000000..796cb8ef

--- /dev/null

+++ b/emoji_reviewer.py

@@ -0,0 +1,382 @@

+import json

+import re

+import warnings

+import gradio as gr

+import os

+import signal

+import sys

+import requests

+import tomli

+

+from dotenv import load_dotenv

+from src.common.database import db

+

+try:

+ from src.common.logger import get_module_logger

+

+ logger = get_module_logger("emoji_reviewer")

+except ImportError:

+ from loguru import logger

+

+ # 检查并创建日志目录

+ log_dir = "logs/emoji_reviewer"

+ if not os.path.exists(log_dir):

+ os.makedirs(log_dir, exist_ok=True)

+ # 配置控制台输出格式

+ logger.remove() # 移除默认的处理器

+ logger.add(sys.stderr, format="{time:MM-DD HH:mm} | emoji_reviewer | {message}") # 添加控制台输出

+ logger.add(

+ "logs/emoji_reviewer/{time:YYYY-MM-DD}.log",

+ rotation="00:00",

+ format="{time:MM-DD HH:mm} | emoji_reviewer | {message}"

+ )

+ logger.warning("检测到src.common.logger并未导入,将使用默认loguru作为日志记录器")

+ logger.warning("如果你使用的是低版本(0.5.13)麦麦,请忽略此警告")

+# 忽略 gradio 版本警告

+warnings.filterwarnings("ignore", message="IMPORTANT: You are using gradio version.*")

+

+root_dir = os.path.dirname(os.path.abspath(__file__))

+bot_config_path = os.path.join(root_dir, "config/bot_config.toml")

+if os.path.exists(bot_config_path):

+ with open(bot_config_path, "rb") as f:

+ try:

+ toml_dict = tomli.load(f)

+ embedding_config = toml_dict['model']['embedding']

+ embedding_name = embedding_config["name"]

+ embedding_provider = embedding_config["provider"]

+ except tomli.TOMLDecodeError as e:

+ logger.critical(f"配置文件bot_config.toml填写有误,请检查第{e.lineno}行第{e.colno}处:{e.msg}")

+ exit(1)

+ except KeyError:

+ logger.critical("配置文件bot_config.toml缺少model.embedding设置,请补充后再编辑表情包")

+ exit(1)

+else:

+ logger.critical(f"没有找到配置文件{bot_config_path}")

+ exit(1)

+env_path = os.path.join(root_dir, ".env.prod")

+if not os.path.exists(env_path):

+ logger.critical(f"没有找到环境变量文件{env_path}")

+ exit(1)

+load_dotenv(env_path)

+

+tags_choices = ["无", "包括", "排除"]

+tags = {

+ "reviewed": ("已审查", "排除"),

+ "blacklist": ("黑名单", "排除"),

+}

+format_choices = ["包括", "无"]

+formats = ["jpg", "jpeg", "png", "gif", "其它"]

+

+

+def signal_handler(signum, frame):

+ """处理 Ctrl+C 信号"""

+ logger.info("收到终止信号,正在关闭 Gradio 服务器...")

+ sys.exit(0)

+

+

+# 注册信号处理器

+signal.signal(signal.SIGINT, signal_handler)

+required_fields = ["_id", "path", "description", "hash", *tags.keys()] # 修复拼写错误的时候记得把这里的一起改了

+

+emojis_db = list(db.emoji.find({}, {k: 1 for k in required_fields}))

+emoji_filtered = []

+emoji_show = None

+

+max_num = 20

+neglect_update = 0

+

+

+async def get_embedding(text):

+ try:

+ base_url = os.environ.get(f"{embedding_provider}_BASE_URL")

+ if base_url.endswith('/'):

+ url = base_url + 'embeddings'

+ else:

+ url = base_url + '/embeddings'

+ key = os.environ.get(f"{embedding_provider}_KEY")

+ headers = {

+ "Authorization": f"Bearer {key}",

+ "Content-Type": "application/json"

+ }

+ payload = {

+ "model": embedding_name,

+ "input": text,

+ "encoding_format": "float"

+ }

+ response = requests.post(url, headers=headers, data=json.dumps(payload))

+ if response.status_code == 200:

+ result = response.json()

+ embedding = result["data"][0]["embedding"]

+ return embedding

+ else:

+ return f"网络错误{response.status_code}"

+ except Exception:

+ return None

+

+

+def set_max_num(slider):

+ global max_num

+ max_num = slider

+

+

+def filter_emojis(tag_filters, format_filters):

+ global emoji_filtered

+ e_filtered = emojis_db

+

+ format_include = []

+ for format, value in format_filters.items():

+ if value:

+ format_include.append(format)

+

+ if len(format_include) == 0:

+ return []

+

+ for tag, value in tag_filters.items():

+ if value == "包括":

+ e_filtered = [d for d in e_filtered if tag in d]

+ elif value == "排除":

+ e_filtered = [d for d in e_filtered if tag not in d]

+

+ if '其它' in format_include:

+ exclude = [f for f in formats if f not in format_include]

+ if exclude:

+ ff = '|'.join(exclude)

+ compiled_pattern = re.compile(rf"\.({ff})$", re.IGNORECASE)

+ e_filtered = [d for d in e_filtered if not compiled_pattern.search(d.get("path", ""))]

+ else:

+ ff = '|'.join(format_include)

+ compiled_pattern = re.compile(rf"\.({ff})$", re.IGNORECASE)

+ e_filtered = [d for d in e_filtered if compiled_pattern.search(d.get("path", ""))]

+

+ emoji_filtered = e_filtered

+

+

+def update_gallery(from_latest, *filter_values):

+ global emoji_filtered

+ tf = filter_values[:len(tags)]

+ ff = filter_values[len(tags):]

+ filter_emojis({k: v for k, v in zip(tags.keys(), tf)}, {k: v for k, v in zip(formats, ff)})

+ if from_latest:

+ emoji_filtered.reverse()

+ if len(emoji_filtered) > max_num:

+ info = f"已筛选{len(emoji_filtered)}个表情包中的{max_num}个。"

+ emoji_filtered = emoji_filtered[:max_num]

+ else:

+ info = f"已筛选{len(emoji_filtered)}个表情包。"

+ global emoji_show

+ emoji_show = None

+ return [gr.update(value=[], selected_index=None, allow_preview=False), info]

+

+

+def update_gallery2():

+ thumbnails = [e.get("path", "") for e in emoji_filtered]

+ return gr.update(value=thumbnails, allow_preview=True)

+

+

+def on_select(evt: gr.SelectData, *tag_values):

+ new_index = evt.index

+ print(new_index)

+ global emoji_show, neglect_update

+ if new_index is None:

+ emoji_show = None

+ targets = []

+ for current_value in tag_values:

+ if current_value:

+ neglect_update += 1

+ targets.append(False)

+ else:

+ targets.append(gr.update())

+ return [

+ gr.update(selected_index=new_index),

+ "",

+ *targets

+ ]

+ else:

+ emoji_show = emoji_filtered[new_index]

+ targets = []

+ neglect_update = 0

+ for current_value, tag in zip(tag_values, tags.keys()):

+ target = tag in emoji_show

+ if current_value != target:

+ neglect_update += 1

+ targets.append(target)

+ else:

+ targets.append(gr.update())

+ return [

+ gr.update(selected_index=new_index),

+ emoji_show.get("description", ""),

+ *targets

+ ]

+

+

+def desc_change(desc, edited):

+ if emoji_show and desc != emoji_show.get("description", ""):

+ if edited:

+ return [gr.update(), True]

+ else:

+ return ["(尚未保存)", True]

+ if edited:

+ return ["", False]

+ else:

+ return [gr.update(), False]

+

+

+def revert_desc():

+ if emoji_show:

+ return emoji_show.get("description", "")

+ else:

+ return ""

+

+

+async def save_desc(desc):

+ if emoji_show:

+ try:

+ yield ["正在构建embedding,请勿关闭页面...", gr.update(interactive=False), gr.update(interactive=False)]

+ embedding = await get_embedding(desc)

+ if embedding is None or isinstance(embedding, str):

+ yield [

+ f"获取embeddings失败!{embedding}",

+ gr.update(interactive=True),

+ gr.update(interactive=True)

+ ]

+ else:

+ e_id = emoji_show["_id"]

+ update_dict = {"$set": {"embedding": embedding, "description": desc}}

+ db.emoji.update_one({"_id": e_id}, update_dict)

+

+ e_hash = emoji_show["hash"]

+ update_dict = {"$set": {"description": desc}}

+ db.images.update_one({"hash": e_hash}, update_dict)

+ db.image_descriptions.update_one({"hash": e_hash}, update_dict)

+ emoji_show["description"] = desc

+

+ logger.info(f'Update description and embeddings: {e_id}(hash={e_hash})')

+ yield ["保存完成", gr.update(value=desc, interactive=True), gr.update(interactive=True)]

+ except Exception as e:

+ yield [

+ f"出现异常: {e}",

+ gr.update(interactive=True),

+ gr.update(interactive=True)

+ ]

+

+ else:

+ yield ["没有选中表情包", gr.update(), gr.update()]

+

+

+def change_tag(*tag_values):

+ if not emoji_show:

+ return gr.update()

+ global neglect_update

+ if neglect_update > 0:

+ neglect_update -= 1

+ return gr.update()

+ set_dict = {}

+ unset_dict = {}

+ e_id = emoji_show["_id"]

+ for value, tag in zip(tag_values, tags.keys()):

+ if value:

+ if tag not in emoji_show:

+ set_dict[tag] = True

+ emoji_show[tag] = True

+ logger.info(f'Add tag "{tag}" to {e_id}')

+ else:

+ if tag in emoji_show:

+ unset_dict[tag] = ""

+ del emoji_show[tag]

+ logger.info(f'Delete tag "{tag}" from {e_id}')

+

+ update_dict = {"$set": set_dict, "$unset": unset_dict}

+ db.emoji.update_one({"_id": e_id}, update_dict)

+ return "已更新标签状态"

+

+

+with gr.Blocks(title="MaimBot表情包审查器") as app:

+ desc_edit = gr.State(value=False)

+ gr.Markdown(

+ value="""

+ # MaimBot表情包审查器

+ """

+ )

+ gr.Markdown(value="---") # 添加分割线

+ gr.Markdown(value="""

+ ## 审查器说明\n

+ 该审查器用于人工修正识图模型对表情包的识别偏差,以及管理表情包黑名单:\n

+ 每一个表情包都有描述以及“已审查”和“黑名单”两个标签。描述可以编辑并保存。“黑名单”标签可以禁止麦麦使用该表情包。\n

+ 作者:遗世紫丁香(HexatomicRing)

+ """)

+ gr.Markdown(value="---")

+

+ with gr.Row():

+ with gr.Column(scale=2):

+ info_label = gr.Markdown("")

+ gallery = gr.Gallery(label="表情包列表", columns=4, rows=6)

+ description = gr.Textbox(label="描述", interactive=True)

+ description_label = gr.Markdown("")

+ tag_boxes = {

+ tag: gr.Checkbox(label=name, interactive=True)

+ for tag, (name, _) in tags.items()

+ }

+

+ with gr.Row():

+ revert_btn = gr.Button("还原描述")

+ save_btn = gr.Button("保存描述")

+

+ with gr.Column(scale=1):

+ max_num_slider = gr.Slider(label="最大显示数量", minimum=1, maximum=500, value=max_num, interactive=True)

+ check_from_latest = gr.Checkbox(label="由新到旧", interactive=True)

+ tag_filters = {

+ tag: gr.Dropdown(tags_choices, value=value, label=f"{name}筛选")

+ for tag, (name, value) in tags.items()

+ }

+ gr.Markdown(value="---")

+ gr.Markdown(value="格式筛选:")

+ format_filters = {

+ f: gr.Checkbox(label=f, value=True)

+ for f in formats

+ }

+ refresh_btn = gr.Button("刷新筛选")

+ filters = list(tag_filters.values()) + list(format_filters.values())

+

+ max_num_slider.change(set_max_num, max_num_slider, None)

+ description.change(desc_change, [description, desc_edit], [description_label, desc_edit])

+ for component in filters:

+ component.change(

+ fn=update_gallery,

+ inputs=[check_from_latest, *filters],

+ outputs=[gallery, info_label],

+ preprocess=False

+ ).then(

+ fn=update_gallery2,

+ inputs=None,

+ outputs=gallery)

+ refresh_btn.click(

+ fn=update_gallery,

+ inputs=[check_from_latest, *filters],

+ outputs=[gallery, info_label],

+ preprocess=False

+ ).then(

+ fn=update_gallery2,

+ inputs=None,

+ outputs=gallery)

+ gallery.select(fn=on_select, inputs=list(tag_boxes.values()), outputs=[gallery, description, *tag_boxes.values()])

+ revert_btn.click(fn=revert_desc, inputs=None, outputs=description)

+ save_btn.click(fn=save_desc, inputs=description, outputs=[description_label, description, save_btn])

+ for box in tag_boxes.values():

+ box.change(fn=change_tag, inputs=list(tag_boxes.values()), outputs=description_label)

+ app.load(

+ fn=update_gallery,

+ inputs=[check_from_latest, *filters],

+ outputs=[gallery, info_label],

+ preprocess=False

+ ).then(

+ fn=update_gallery2,

+ inputs=None,

+ outputs=gallery)

+ app.queue().launch(

+ server_name="0.0.0.0",

+ inbrowser=True,

+ share=False,

+ server_port=7001,

+ debug=True,

+ quiet=True,

+ )
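
Review note on `filter_emojis` in `emoji_reviewer.py`: the format filter keeps or drops records by matching the file extension at the end of `path`. The following is a minimal, self-contained sketch of that step only, assuming records are plain dicts with an optional `path` key. The names `filter_by_format`, `KNOWN_FORMATS`, and `OTHER` are hypothetical and introduced here for illustration; they are not part of the PR. A compiled pattern already carries its flags, so `search` is called with the string alone.

```python
import re

KNOWN_FORMATS = ["jpg", "jpeg", "png", "gif"]
OTHER = "other"  # hypothetical stand-in for the "其它" checkbox in the reviewer UI


def filter_by_format(records, include):
    """Return the records whose path extension is selected in `include`."""
    if not include:
        # Nothing ticked: nothing to show.
        return []
    if OTHER in include:
        # "Other" is ticked: drop only the known formats that were NOT ticked.
        excluded = [f for f in KNOWN_FORMATS if f not in include]
        if not excluded:
            return list(records)
        alternation = "|".join(excluded)
        pattern = re.compile(rf"\.({alternation})$", re.IGNORECASE)
        return [r for r in records if not pattern.search(r.get("path", ""))]
    # "Other" is not ticked: keep only the ticked known formats.
    alternation = "|".join(include)
    pattern = re.compile(rf"\.({alternation})$", re.IGNORECASE)
    return [r for r in records if pattern.search(r.get("path", ""))]
```

Passing a flag such as `re.IGNORECASE` as the second argument of a compiled pattern's `search` would be interpreted as the start position `pos`, which is why the sketch sets the flag once in `re.compile`.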

diff --git a/requirements.txt b/requirements.txt

index 1e9e5ff2..0dfd7514 100644

Binary files a/requirements.txt and b/requirements.txt differ

diff --git a/run.py b/run.py

index cfd3a5f1..43bdcd91 100644

--- a/run.py

+++ b/run.py

@@ -54,9 +54,7 @@ def run_maimbot():

run_cmd(r"napcat\NapCatWinBootMain.exe 10001", False)

if not os.path.exists(r"mongodb\db"):

os.makedirs(r"mongodb\db")

- run_cmd(

- r"mongodb\bin\mongod.exe --dbpath=" + os.getcwd() + r"\mongodb\db --port 27017"

- )

+ run_cmd(r"mongodb\bin\mongod.exe --dbpath=" + os.getcwd() + r"\mongodb\db --port 27017")

run_cmd("nb run")

@@ -70,30 +68,29 @@ def install_mongodb():

stream=True,

)

total = int(resp.headers.get("content-length", 0)) # 计算文件大小

- with open("mongodb.zip", "w+b") as file, tqdm( # 展示下载进度条,并解压文件

- desc="mongodb.zip",

- total=total,

- unit="iB",

- unit_scale=True,

- unit_divisor=1024,

- ) as bar:

+ with (

+ open("mongodb.zip", "w+b") as file,

+ tqdm( # 展示下载进度条,并解压文件

+ desc="mongodb.zip",

+ total=total,

+ unit="iB",

+ unit_scale=True,

+ unit_divisor=1024,

+ ) as bar,

+ ):

for data in resp.iter_content(chunk_size=1024):

size = file.write(data)

bar.update(size)

extract_files("mongodb.zip", "mongodb")

print("MongoDB 下载完成")

os.remove("mongodb.zip")

- choice = input(

- "是否安装 MongoDB Compass?此软件可以以可视化的方式修改数据库,建议安装(Y/n)"

- ).upper()

+ choice = input("是否安装 MongoDB Compass?此软件可以以可视化的方式修改数据库,建议安装(Y/n)").upper()

if choice == "Y" or choice == "":

install_mongodb_compass()

def install_mongodb_compass():

- run_cmd(

- r"powershell Start-Process powershell -Verb runAs 'Set-ExecutionPolicy RemoteSigned'"

- )

+ run_cmd(r"powershell Start-Process powershell -Verb runAs 'Set-ExecutionPolicy RemoteSigned'")

input("请在弹出的用户账户控制中点击“是”后按任意键继续安装")

run_cmd(r"powershell mongodb\bin\Install-Compass.ps1")

input("按任意键启动麦麦")

@@ -107,7 +104,7 @@ def install_napcat():

napcat_filename = input(

"下载完成后请把文件复制到此文件夹,并将**不包含后缀的文件名**输入至此窗口,如 NapCat.32793.Shell:"

)

- if(napcat_filename[-4:] == ".zip"):

+ if napcat_filename[-4:] == ".zip":

napcat_filename = napcat_filename[:-4]

extract_files(napcat_filename + ".zip", "napcat")

print("NapCat 安装完成")

@@ -121,11 +118,7 @@ if __name__ == "__main__":

print("按任意键退出")

input()

exit(1)

- choice = input(

- "请输入要进行的操作:\n"

- "1.首次安装\n"

- "2.运行麦麦\n"

- )

+ choice = input("请输入要进行的操作:\n1.首次安装\n2.运行麦麦\n")

os.system("cls")

if choice == "1":

confirm = input("首次安装将下载并配置所需组件\n1.确认\n2.取消\n")

diff --git a/script/run_thingking.bat b/script/run_thingking.bat

index a134da6f..0806e46e 100644

--- a/script/run_thingking.bat

+++ b/script/run_thingking.bat

@@ -1,5 +1,5 @@

-call conda activate niuniu

-cd src\gui

-start /b python reasoning_gui.py

+@REM call conda activate niuniu

+cd ../src\gui

+start /b ../../venv/scripts/python.exe reasoning_gui.py

exit

diff --git a/setup.py b/setup.py

index 2598a38a..6222dbb5 100644

--- a/setup.py

+++ b/setup.py

@@ -5,7 +5,7 @@ setup(

version="0.1",

packages=find_packages(),

install_requires=[

- 'python-dotenv',

- 'pymongo',

+ "python-dotenv",

+ "pymongo",

],

-)

\ No newline at end of file

+)

diff --git a/src/common/__init__.py b/src/common/__init__.py

index 9a8a345d..497b4a41 100644

--- a/src/common/__init__.py

+++ b/src/common/__init__.py

@@ -1 +1 @@

-# 这个文件可以为空,但必须存在

\ No newline at end of file

+# 这个文件可以为空,但必须存在

diff --git a/src/common/database.py b/src/common/database.py

index cd149e52..a3e5b4e3 100644

--- a/src/common/database.py

+++ b/src/common/database.py

@@ -1,5 +1,4 @@

import os

-from typing import cast

from pymongo import MongoClient

from pymongo.database import Database

@@ -11,7 +10,7 @@ def __create_database_instance():

uri = os.getenv("MONGODB_URI")

host = os.getenv("MONGODB_HOST", "127.0.0.1")

port = int(os.getenv("MONGODB_PORT", "27017"))

- db_name = os.getenv("DATABASE_NAME", "MegBot")

+ # db_name 变量在创建连接时不需要,在获取数据库实例时才使用

username = os.getenv("MONGODB_USERNAME")

password = os.getenv("MONGODB_PASSWORD")

auth_source = os.getenv("MONGODB_AUTH_SOURCE")

diff --git a/src/common/logger.py b/src/common/logger.py

index c546b700..45d6f415 100644

--- a/src/common/logger.py

+++ b/src/common/logger.py

@@ -5,8 +5,11 @@ import os

from types import ModuleType

from pathlib import Path

from dotenv import load_dotenv

+# from ..plugins.chat.config import global_config

-load_dotenv()

+# 加载 .env.prod 文件

+env_path = Path(__file__).resolve().parent.parent.parent / ".env.prod"

+load_dotenv(dotenv_path=env_path)

# 保存原生处理器ID

default_handler_id = None

@@ -28,32 +31,159 @@ _handler_registry: Dict[str, List[int]] = {}

current_file_path = Path(__file__).resolve()

LOG_ROOT = "logs"

-# 默认全局配置

-DEFAULT_CONFIG = {

- # 日志级别配置

- "console_level": "INFO",

- "file_level": "DEBUG",

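+# os.getenv returns a string, and a non-empty string such as "false" is truthy; parse it into a real bool
+SIMPLE_OUTPUT = os.getenv("SIMPLE_OUTPUT", "false").strip().lower() in ("1", "true", "yes", "on")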

+print(f"SIMPLE_OUTPUT: {SIMPLE_OUTPUT}")

- # 格式配置

- "console_format": (

- "{time:YYYY-MM-DD HH:mm:ss} | "

- "{level: <8} | "

- "{extra[module]: <12} | "

- "{message} "

- ),

- "file_format": (

- "{time:YYYY-MM-DD HH:mm:ss} | "

- "{level: <8} | "

- "{extra[module]: <15} | "

- "{message}"

- ),

- "log_dir": LOG_ROOT,

- "rotation": "00:00",

- "retention": "3 days",

- "compression": "zip",

+if not SIMPLE_OUTPUT:

+ # 默认全局配置

+ DEFAULT_CONFIG = {

+ # 日志级别配置

+ "console_level": "INFO",

+ "file_level": "DEBUG",

+ # 格式配置

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | {message}"),

+ "log_dir": LOG_ROOT,

+ "rotation": "00:00",

+ "retention": "3 days",

+ "compression": "zip",

+ }

+else:

+ DEFAULT_CONFIG = {

+ # 日志级别配置

+ "console_level": "INFO",

+ "file_level": "DEBUG",

+ # 格式配置

+ "console_format": ("{time:MM-DD HH:mm} | {extra[module]} | {message}"),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | {message}"),

+ "log_dir": LOG_ROOT,

+ "rotation": "00:00",

+ "retention": "3 days",

+ "compression": "zip",

+ }

+

+

+# 海马体日志样式配置

+MEMORY_STYLE_CONFIG = {

+ "advanced": {

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "海马体 | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 海马体 | {message}"),

+ },

+ "simple": {

+ "console_format": ("{time:MM-DD HH:mm} | 海马体 | {message}"),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 海马体 | {message}"),

+ },

}

+#MOOD

+MOOD_STYLE_CONFIG = {

+ "advanced": {

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "心情 | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 心情 | {message}"),

+ },

+ "simple": {

+ "console_format": ("{time:MM-DD HH:mm} | 心情 | {message}"),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 心情 | {message}"),

+ },

+}

+

+SENDER_STYLE_CONFIG = {

+ "advanced": {

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "消息发送 | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 消息发送 | {message}"),

+ },

+ "simple": {

+ "console_format": ("{time:MM-DD HH:mm} | 消息发送 | {message}"),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 消息发送 | {message}"),

+ },

+}

+

+LLM_STYLE_CONFIG = {

+ "advanced": {

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "麦麦组织语言 | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 麦麦组织语言 | {message}"),

+ },

+ "simple": {

+ "console_format": ("{time:MM-DD HH:mm} | 麦麦组织语言 | {message}"),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 麦麦组织语言 | {message}"),

+ },

+}

+

+

+# Topic日志样式配置

+TOPIC_STYLE_CONFIG = {

+ "advanced": {

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "话题 | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 话题 | {message}"),

+ },

+ "simple": {

+ "console_format": ("{time:MM-DD HH:mm} | 主题 | {message}"),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 话题 | {message}"),

+ },

+}

+

+# Chat日志样式配置

+CHAT_STYLE_CONFIG = {

+ "advanced": {

+ "console_format": (

+ "{time:YYYY-MM-DD HH:mm:ss} | "

+ "{level: <8} | "

+ "{extra[module]: <12} | "

+ "见闻 | "

+ "{message} "

+ ),

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 见闻 | {message}"),

+ },

+ "simple": {

+ "console_format": ("{time:MM-DD HH:mm} | 见闻 | {message} "), # noqa: E501

+ "file_format": ("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {extra[module]: <15} | 见闻 | {message}"),

+ },

+}

+

+# 根据SIMPLE_OUTPUT选择配置

+MEMORY_STYLE_CONFIG = MEMORY_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else MEMORY_STYLE_CONFIG["advanced"]

+TOPIC_STYLE_CONFIG = TOPIC_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else TOPIC_STYLE_CONFIG["advanced"]

+SENDER_STYLE_CONFIG = SENDER_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else SENDER_STYLE_CONFIG["advanced"]

+LLM_STYLE_CONFIG = LLM_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else LLM_STYLE_CONFIG["advanced"]

+CHAT_STYLE_CONFIG = CHAT_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else CHAT_STYLE_CONFIG["advanced"]

+MOOD_STYLE_CONFIG = MOOD_STYLE_CONFIG["simple"] if SIMPLE_OUTPUT else MOOD_STYLE_CONFIG["advanced"]

+

def is_registered_module(record: dict) -> bool:

"""检查是否为已注册的模块"""

return record["extra"].get("module") in _handler_registry

@@ -93,12 +223,12 @@ class LogConfig:

def get_module_logger(

- module: Union[str, ModuleType],

- *,

- console_level: Optional[str] = None,

- file_level: Optional[str] = None,

- extra_handlers: Optional[List[dict]] = None,

- config: Optional[LogConfig] = None

+ module: Union[str, ModuleType],

+ *,

+ console_level: Optional[str] = None,

+ file_level: Optional[str] = None,

+ extra_handlers: Optional[List[dict]] = None,

+ config: Optional[LogConfig] = None,

) -> LoguruLogger:

module_name = module if isinstance(module, str) else module.__name__

current_config = config.config if config else DEFAULT_CONFIG

@@ -124,7 +254,7 @@ def get_module_logger(

# 文件处理器

log_dir = Path(current_config["log_dir"])

log_dir.mkdir(parents=True, exist_ok=True)

- log_file = log_dir / module_name / f"{{time:YYYY-MM-DD}}.log"

+ log_file = log_dir / module_name / "{time:YYYY-MM-DD}.log"

log_file.parent.mkdir(parents=True, exist_ok=True)

file_id = logger.add(

@@ -161,6 +291,7 @@ def remove_module_logger(module_name: str) -> None:

# 添加全局默认处理器(只处理未注册模块的日志--->控制台)

+# print(os.getenv("DEFAULT_CONSOLE_LOG_LEVEL", "SUCCESS"))

DEFAULT_GLOBAL_HANDLER = logger.add(

sink=sys.stderr,

level=os.getenv("DEFAULT_CONSOLE_LOG_LEVEL", "SUCCESS"),

@@ -170,7 +301,7 @@ DEFAULT_GLOBAL_HANDLER = logger.add(

"{name: <12} | "

"{message} "

),

- filter=is_unregistered_module, # 只处理未注册模块的日志

+ filter=lambda record: is_unregistered_module(record), # 只处理未注册模块的日志,并过滤nonebot

enqueue=True,

)

@@ -181,18 +312,13 @@ other_log_dir = log_dir / "other"

other_log_dir.mkdir(parents=True, exist_ok=True)

DEFAULT_FILE_HANDLER = logger.add(

- sink=str(other_log_dir / f"{{time:YYYY-MM-DD}}.log"),

+ sink=str(other_log_dir / "{time:YYYY-MM-DD}.log"),

level=os.getenv("DEFAULT_FILE_LOG_LEVEL", "DEBUG"),

- format=(

- "{time:YYYY-MM-DD HH:mm:ss} | "

- "{level: <8} | "

- "{name: <15} | "

- "{message}"

- ),

+ format=("{time:YYYY-MM-DD HH:mm:ss} | {level: <8} | {name: <15} | {message}"),

rotation=DEFAULT_CONFIG["rotation"],

retention=DEFAULT_CONFIG["retention"],

compression=DEFAULT_CONFIG["compression"],

encoding="utf-8",

- filter=is_unregistered_module, # 只处理未注册模块的日志

+ filter=lambda record: is_unregistered_module(record), # 只处理未注册模块的日志,并过滤nonebot

enqueue=True,

)
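
Review note on `src/common/logger.py`: switches such as `SIMPLE_OUTPUT` arrive from the environment as strings, and in Python any non-empty string, including `"false"`, is truthy. The following is a minimal sketch of one way to turn such a variable into a real boolean before branching on it. The helper name `env_flag` and the accepted spellings in `TRUE_VALUES` are assumptions made for this illustration, not something the PR defines.

```python
import os

# Assumed set of spellings that count as "enabled"; adjust to project convention.
TRUE_VALUES = {"1", "true", "yes", "on"}


def env_flag(name, default=False):
    """Read an environment variable and interpret it as a boolean."""
    raw = os.getenv(name)
    if raw is None:
        # Variable not set at all: fall back to the caller's default.
        return default
    return raw.strip().lower() in TRUE_VALUES
```

With a helper like this, a check such as `if not env_flag("SIMPLE_OUTPUT")` selects the detailed format when the variable is unset or set to `false`.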

diff --git a/src/gui/reasoning_gui.py b/src/gui/reasoning_gui.py

index b7a0fc08..43f692d5 100644

--- a/src/gui/reasoning_gui.py

+++ b/src/gui/reasoning_gui.py

@@ -6,6 +6,8 @@ import time

from datetime import datetime

from typing import Dict, List

from typing import Optional

+sys.path.insert(0, sys.path[0]+"/../")

+sys.path.insert(0, sys.path[0]+"/../")

from src.common.logger import get_module_logger

import customtkinter as ctk

@@ -16,16 +18,16 @@ logger = get_module_logger("gui")

# 获取当前文件的目录

current_dir = os.path.dirname(os.path.abspath(__file__))

# 获取项目根目录

-root_dir = os.path.abspath(os.path.join(current_dir, '..', '..'))

+root_dir = os.path.abspath(os.path.join(current_dir, "..", ".."))

sys.path.insert(0, root_dir)

-from src.common.database import db

+from src.common.database import db # noqa: E402

# 加载环境变量

-if os.path.exists(os.path.join(root_dir, '.env.dev')):

- load_dotenv(os.path.join(root_dir, '.env.dev'))

+if os.path.exists(os.path.join(root_dir, ".env.dev")):

+ load_dotenv(os.path.join(root_dir, ".env.dev"))

logger.info("成功加载开发环境配置")

-elif os.path.exists(os.path.join(root_dir, '.env.prod')):

- load_dotenv(os.path.join(root_dir, '.env.prod'))

+elif os.path.exists(os.path.join(root_dir, ".env.prod")):

+ load_dotenv(os.path.join(root_dir, ".env.prod"))

logger.info("成功加载生产环境配置")

else:

logger.error("未找到环境配置文件")

@@ -44,8 +46,8 @@ class ReasoningGUI:

# 创建主窗口

self.root = ctk.CTk()

- self.root.title('麦麦推理')

- self.root.geometry('800x600')

+ self.root.title("麦麦推理")

+ self.root.geometry("800x600")

self.root.protocol("WM_DELETE_WINDOW", self._on_closing)

# 存储群组数据

@@ -107,12 +109,7 @@ class ReasoningGUI:

self.control_frame = ctk.CTkFrame(self.frame)

self.control_frame.pack(fill="x", padx=10, pady=5)

- self.clear_button = ctk.CTkButton(

- self.control_frame,

- text="清除显示",

- command=self.clear_display,

- width=120

- )

+ self.clear_button = ctk.CTkButton(self.control_frame, text="清除显示", command=self.clear_display, width=120)

self.clear_button.pack(side="left", padx=5)

# 启动自动更新线程

@@ -132,10 +129,10 @@ class ReasoningGUI:

try:

while True:

task = self.update_queue.get_nowait()

- if task['type'] == 'update_group_list':

+ if task["type"] == "update_group_list":

self._update_group_list_gui()

- elif task['type'] == 'update_display':

- self._update_display_gui(task['group_id'])

+ elif task["type"] == "update_display":

+ self._update_display_gui(task["group_id"])

except queue.Empty:

pass

finally:

@@ -157,7 +154,7 @@ class ReasoningGUI:

width=160,

height=30,

corner_radius=8,

- command=lambda gid=group_id: self._on_group_select(gid)

+ command=lambda gid=group_id: self._on_group_select(gid),

)

button.pack(pady=2, padx=5)

self.group_buttons[group_id] = button

@@ -190,7 +187,7 @@ class ReasoningGUI:

self.content_text.delete("1.0", "end")

for item in self.group_data[group_id]:

# 时间戳

- time_str = item['time'].strftime("%Y-%m-%d %H:%M:%S")

+ time_str = item["time"].strftime("%Y-%m-%d %H:%M:%S")

self.content_text.insert("end", f"[{time_str}]\n", "timestamp")

# 用户信息

@@ -207,9 +204,9 @@ class ReasoningGUI:

# Prompt内容

self.content_text.insert("end", "Prompt内容:\n", "timestamp")

- prompt_text = item.get('prompt', '')

- if prompt_text and prompt_text.lower() != 'none':

- lines = prompt_text.split('\n')

+ prompt_text = item.get("prompt", "")

+ if prompt_text and prompt_text.lower() != "none":

+ lines = prompt_text.split("\n")

for line in lines:

if line.strip():

self.content_text.insert("end", " " + line + "\n", "prompt")

@@ -218,9 +215,9 @@ class ReasoningGUI:

# 推理过程

self.content_text.insert("end", "推理过程:\n", "timestamp")

- reasoning_text = item.get('reasoning', '')

- if reasoning_text and reasoning_text.lower() != 'none':

- lines = reasoning_text.split('\n')

+ reasoning_text = item.get("reasoning", "")

+ if reasoning_text and reasoning_text.lower() != "none":

+ lines = reasoning_text.split("\n")

for line in lines:

if line.strip():

self.content_text.insert("end", " " + line + "\n", "reasoning")

@@ -260,28 +257,30 @@ class ReasoningGUI:

logger.debug(f"记录时间: {item['time']}, 类型: {type(item['time'])}")

total_count += 1

- group_id = str(item.get('group_id', 'unknown'))

+ group_id = str(item.get("group_id", "unknown"))

if group_id not in new_data:

new_data[group_id] = []

# 转换时间戳为datetime对象

- if isinstance(item['time'], (int, float)):

- time_obj = datetime.fromtimestamp(item['time'])

- elif isinstance(item['time'], datetime):

- time_obj = item['time']

+ if isinstance(item["time"], (int, float)):

+ time_obj = datetime.fromtimestamp(item["time"])

+ elif isinstance(item["time"], datetime):

+ time_obj = item["time"]

else:

logger.warning(f"未知的时间格式: {type(item['time'])}")

time_obj = datetime.now() # 使用当前时间作为后备

- new_data[group_id].append({

- 'time': time_obj,

- 'user': item.get('user', '未知'),

- 'message': item.get('message', ''),

- 'model': item.get('model', '未知'),

- 'reasoning': item.get('reasoning', ''),

- 'response': item.get('response', ''),

- 'prompt': item.get('prompt', '') # 添加prompt字段

- })

+ new_data[group_id].append(

+ {

+ "time": time_obj,

+ "user": item.get("user", "未知"),

+ "message": item.get("message", ""),

+ "model": item.get("model", "未知"),

+ "reasoning": item.get("reasoning", ""),

+ "response": item.get("response", ""),

+ "prompt": item.get("prompt", ""), # 添加prompt字段

+ }

+ )

logger.info(f"从数据库加载了 {total_count} 条记录,分布在 {len(new_data)} 个群组中")

@@ -290,15 +289,12 @@ class ReasoningGUI:

self.group_data = new_data

logger.info("数据已更新,正在刷新显示...")

# 将更新任务添加到队列

- self.update_queue.put({'type': 'update_group_list'})

+ self.update_queue.put({"type": "update_group_list"})

if self.group_data:

# 如果没有选中的群组,选择最新的群组

if not self.selected_group_id or self.selected_group_id not in self.group_data:

self.selected_group_id = next(iter(self.group_data))

- self.update_queue.put({

- 'type': 'update_display',

- 'group_id': self.selected_group_id

- })

+ self.update_queue.put({"type": "update_display", "group_id": self.selected_group_id})

except Exception:

logger.exception("自动更新出错")
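
Review note on `src/gui/reasoning_gui.py`: the update loop accepts a `time` field that may be a Unix timestamp or a `datetime`, and falls back to the current time otherwise. A minimal sketch of that normalization as a standalone function, assuming the same three cases shown in the hunk above; the name `normalize_time` is hypothetical and used only for illustration.

```python
from datetime import datetime


def normalize_time(value):
    """Convert a stored time value into a datetime object."""
    if isinstance(value, (int, float)):
        # Numeric values are treated as Unix timestamps (local time).
        return datetime.fromtimestamp(value)
    if isinstance(value, datetime):
        # Already a datetime: return it unchanged.
        return value
    # Unknown format: use the current time as a fallback.
    return datetime.now()
```

Pulling the conversion out of the loop keeps the record-building code short and makes the fallback behavior easy to test in isolation.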

diff --git a/src/plugins/chat/Segment_builder.py b/src/plugins/chat/Segment_builder.py

index ed75f709..8bd3279b 100644

--- a/src/plugins/chat/Segment_builder.py

+++ b/src/plugins/chat/Segment_builder.py

@@ -10,51 +10,47 @@ for sending through bots that implement the OneBot interface.

"""

-

class Segment:

"""Base class for all message segments."""

-

+

def __init__(self, type_: str, data: Dict[str, Any]):

self.type = type_

self.data = data

-

+

def to_dict(self) -> Dict[str, Any]:

"""Convert the segment to a dictionary format."""

- return {

- "type": self.type,

- "data": self.data

- }

+ return {"type": self.type, "data": self.data}

class Text(Segment):

"""Text message segment."""

-

+

def __init__(self, text: str):

super().__init__("text", {"text": text})

class Face(Segment):

"""Face/emoji message segment."""

-

+

def __init__(self, face_id: int):

super().__init__("face", {"id": str(face_id)})

class Image(Segment):

"""Image message segment."""

-

+

@classmethod

- def from_url(cls, url: str) -> 'Image':

+ def from_url(cls, url: str) -> "Image":

"""Create an Image segment from a URL."""

return cls(url=url)

-

+

@classmethod

- def from_path(cls, path: str) -> 'Image':

+ def from_path(cls, path: str) -> "Image":

"""Create an Image segment from a file path."""

- with open(path, 'rb') as f:

- file_b64 = base64.b64encode(f.read()).decode('utf-8')

+ with open(path, "rb") as f:

+ file_b64 = base64.b64encode(f.read()).decode("utf-8")

return cls(file=f"base64://{file_b64}")

-

+

def __init__(self, file: str = None, url: str = None, cache: bool = True):

data = {}

if file:

@@ -68,7 +64,7 @@ class Image(Segment):

class At(Segment):

"""@Someone message segment."""

-

+

def __init__(self, user_id: Union[int, str]):

data = {"qq": str(user_id)}

super().__init__("at", data)

@@ -76,7 +72,7 @@ class At(Segment):

class Record(Segment):

"""Voice message segment."""

-

+

def __init__(self, file: str, magic: bool = False, cache: bool = True):

data = {"file": file}

if magic:

@@ -88,59 +84,59 @@ class Record(Segment):

class Video(Segment):

"""Video message segment."""

-

+

def __init__(self, file: str):

super().__init__("video", {"file": file})

class Reply(Segment):

"""Reply message segment."""

-

+

def __init__(self, message_id: int):

super().__init__("reply", {"id": str(message_id)})

class MessageBuilder:

"""Helper class for building complex messages."""

-

+

def __init__(self):

self.segments: List[Segment] = []

-

- def text(self, text: str) -> 'MessageBuilder':

+

+ def text(self, text: str) -> "MessageBuilder":

"""Add a text segment."""

self.segments.append(Text(text))

return self

-

- def face(self, face_id: int) -> 'MessageBuilder':

+

+ def face(self, face_id: int) -> "MessageBuilder":

"""Add a face/emoji segment."""

self.segments.append(Face(face_id))

return self

-

- def image(self, file: str = None) -> 'MessageBuilder':

+

+ def image(self, file: str = None) -> "MessageBuilder":

"""Add an image segment."""

self.segments.append(Image(file=file))

return self

-

- def at(self, user_id: Union[int, str]) -> 'MessageBuilder':

+

+ def at(self, user_id: Union[int, str]) -> "MessageBuilder":

"""Add an @someone segment."""

self.segments.append(At(user_id))

return self

-

- def record(self, file: str, magic: bool = False) -> 'MessageBuilder':

+

+ def record(self, file: str, magic: bool = False) -> "MessageBuilder":

"""Add a voice record segment."""

self.segments.append(Record(file, magic))

return self

-

- def video(self, file: str) -> 'MessageBuilder':

+

+ def video(self, file: str) -> "MessageBuilder":

"""Add a video segment."""

self.segments.append(Video(file))

return self

-

- def reply(self, message_id: int) -> 'MessageBuilder':

+

+ def reply(self, message_id: int) -> "MessageBuilder":

"""Add a reply segment."""

self.segments.append(Reply(message_id))

return self

-

+

def build(self) -> List[Dict[str, Any]]:

"""Build the message into a list of segment dictionaries."""

return [segment.to_dict() for segment in self.segments]

@@ -161,4 +157,4 @@ def image_path(path: str) -> Dict[str, Any]:

def at(user_id: Union[int, str]) -> Dict[str, Any]:

"""Create an @someone message segment."""

- return At(user_id).to_dict()'''

\ No newline at end of file

+ return At(user_id).to_dict()'''

diff --git a/src/plugins/chat/__init__.py b/src/plugins/chat/__init__.py

index 75c7b452..f51184a7 100644

--- a/src/plugins/chat/__init__.py

+++ b/src/plugins/chat/__init__.py

@@ -1,10 +1,8 @@

import asyncio

import time

-import os

from nonebot import get_driver, on_message, on_notice, require

-from nonebot.rule import to_me

-from nonebot.adapters.onebot.v11 import Bot, GroupMessageEvent, Message, MessageSegment, MessageEvent, NoticeEvent

+from nonebot.adapters.onebot.v11 import Bot, MessageEvent, NoticeEvent

from nonebot.typing import T_State

from ..moods.moods import MoodManager # Import the mood manager

@@ -16,11 +14,12 @@ from .emoji_manager import emoji_manager

from .relationship_manager import relationship_manager

from ..willing.willing_manager import willing_manager

from .chat_stream import chat_manager

-from ..memory_system.memory import hippocampus, memory_graph

-from .bot import ChatBot

+from ..memory_system.memory import hippocampus

from .message_sender import message_manager, message_sender

from .storage import MessageStorage

from src.common.logger import get_module_logger

+from src.think_flow_demo.outer_world import outer_world

+from src.think_flow_demo.heartflow import subheartflow_manager

logger = get_module_logger("chat_init")

@@ -36,10 +35,9 @@ config = driver.config

# Initialize the emoji manager

emoji_manager.initialize()

-

-logger.debug(f"Waking up {global_config.BOT_NICKNAME}......")

-# Create the bot instance

-chat_bot = ChatBot()

+logger.success("--------------------------------")

+logger.success(f"Waking up {global_config.BOT_NICKNAME}......version in use: {global_config.MAI_VERSION}")

+logger.success("--------------------------------")

# Register the message handler

msg_in = on_message(priority=5)

# Register the handler for bot-related notices

@@ -48,6 +46,20 @@ notice_matcher = on_notice(priority=1)

scheduler = require("nonebot_plugin_apscheduler").scheduler

+async def start_think_flow():

+ """Start the outer world and the heartflow system."""

+ try:

+ outer_world_task = asyncio.create_task(outer_world.open_eyes())

+ logger.success("Brain and outer world started successfully")

+ # Start the heartflow system

+ heartflow_task = asyncio.create_task(subheartflow_manager.heartflow_start_working())

+ logger.success("Heartflow system started successfully")

+ return outer_world_task, heartflow_task

+ except Exception as e: